The problem with "AI-native"

“We need someone who’s AI-native.”

If you’re a founder or revenue leader hiring for sales roles right now, you’ve probably said some version of this. Maybe you’ve put it in the job description. Maybe you’ve told your recruiter it’s a priority.

And you’re not wrong to want it.

The best sales teams are moving faster, managing larger pipelines, and operating more efficiently because of how they use AI. The gap between teams that have figured this out and teams that haven’t is widening every quarter.

But here’s the challenge: “AI-native” remains one of the most poorly defined requirements in hiring. Everyone agrees it matters. Almost nobody can articulate what it actually looks like in a sales hire. And the definitions keep shifting as tools evolve.

This isn’t just a semantics problem. When you can’t define what you’re looking for, you can’t assess it. And when you can’t assess it, you end up doing one of three things:

- Hiring people who talk a good game about AI but are really just using ChatGPT as a fancier Google search

- Passing on strong candidates because they didn’t name-drop the right tools

- Over-indexing on AI fluency for roles where it may not even be the highest priority

In our AI Fluency Rubric, we mapped what each tier of AI adoption looks like across GTM functions: sales, marketing, RevOps, and customer success. That rubric gave teams a starting point for understanding the spectrum. But we kept hearing the same question:

"Okay, but what does this actually look like when I'm sitting across from a sales candidate?"

This guide is our answer.

We talked to four sales leaders who have built high-performing teams and are actively thinking about how AI impacts them in different GTM contexts:

- Kyle Norton, CRO at Owner.com (Series C, restaurant tech, high-velocity SMB)

- Jon Studham, VP of Sales at Spara (seed stage, AI-native product)

- Lauren Halper, Head of Business Development (Enterprise) at Google Cloud

- Mark Roberge, Co-Founder at Stage 2 Capital and former CRO at HubSpot, author of Science of Scaling

What we found: there is no single right answer. What "AI-native" should mean for your team depends on the role, your stage, and how your org is structured. A seed-stage founder hiring their first AE has a very different calculus than a Series B CRO scaling a 30-person sales org.

So this isn't a prescription. It's a framework. A way to get specific enough about what you actually need that you can hire against it.

Athletes, not specialists: the new sales profile

Before we break this down by role, there's a structural shift we’re seeing reshape GTM hiring across the board.

For the last 20 years, GTM teams got more and more specialized. SDR sets the meeting. AE runs the cycle. CSM handles the renewal. RevOps keeps the machine running. That specialization made sense when the cost of being mediocre at any one of those things was high.

AI changes that math.

"If you have an A+ skill in one area and a C+ skill in another, AI can turn that C+ into a B+ or an A-. Because of that, the benefits of full-cycle will start to outweigh the benefits of specialization."

— Mark Roberge, Co-Founder at Stage 2 Capital

What this means practically: the future AE probably looks more like an athlete than a specialist. Someone who can prospect, run a cycle, manage the relationship, and lean on AI to cover the parts that used to require a dedicated teammate.

This is especially true at early stage. If you're pre-Series A, you probably can't afford to hire a specialist for every motion. You need generalists who use AI well, because AI is what lets them cover ground a specialist used to own. The "athlete who uses AI well" profile is not a nice-to-have at this stage. It's the whole game.

You don't have to redesign your org chart today. But when you're hiring, it's worth asking whether you're hiring for the role as it exists now, or the role as it's going to exist in 18 months. The answer changes who you say yes to.

Why AI fluency doesn't replace sales fundamentals

The most consistent message from the operators we interviewed wasn't about AI at all: AI fluency does not replace sales fundamentals. It amplifies them.

"Curiosity and control still reign. Being able to control a call, clear next steps, being comfortable with discomfort in discovery. Those are the still undefeated things that you need."

— Jon Studham, VP of Sales, Spara

This is the lens we use for every sales hire, and it’s the foundation of everything in this guide. You’re always evaluating two dimensions at the same time:

- Commercial craft. Pipeline discipline, deal management, discovery, negotiation, forecast rigor, follow-through. The fundamentals that have always mattered and still do.

- AI fluency. How someone uses AI tools to amplify that craft. Research, outreach, deal analysis, pipeline management, competitive intelligence. The tools keep changing but the question is always the same: are they using AI to get measurably better outcomes?

The best AI-native sellers have both. And when they don't, AI fluency on its own is not going to save the hire.

The last time the industry had a moment like this was around 2010, when "data-driven sales org" became the thing every founder wanted. A lot of companies overcorrected. They hired CROs who were essentially RevOps leaders wearing sales leadership clothing, then realized those leaders couldn't hire reps, couldn't coach reps, and couldn't close executive-level deals. The right answer turned out to be both. A commercial leader paired with a strong systems partner.

Mark Roberge put this in historical context, and it's something to pay attention to:

"There's a real risk right now of swinging too far because of the FOMO. People are going to hire AEs who are essentially former programmers with data science degrees. And it's going to flop. You still need to hire great reps and coach great reps."

— Mark Roberge, Co-Founder at Stage 2 Capital

We are watching the same pattern play out with AI. Don't let a flashy answer about Claude skills paper over a gap in commercial craft. And don't pass on a great operator because they haven't built a custom GPT yet.

The role-by-role framework

Start by taking stock of where your company actually is on the AI curve. It's tempting to go find the most AI-fluent person on the market, especially when it feels like everyone's racing to keep up. But the right hire is the one whose level matches what your company actually needs right now.

For most founders we work with, that means hiring reps who can build their own AI workflows. If you're seed to Series B, a dedicated applied AI team probably isn't in the budget yet, and that's okay. It just means your reps are your applied AI team. They need more fluency themselves because no one is building it for them. That's why Jon Studham at Spara screens for AI fluency directly in the interview.

On the other end of the spectrum, if you're well-resourced enough to centralize, you have more options. Kyle Norton at Owner.com built a dedicated applied AI team that ships tools to reps:

"I don't actually test at all for AI literacy at the rep level because we build it all ourselves. We just give it to you on a platter in Salesforce or whatever surface you're in."

— Kyle Norton, CRO at Owner.com

That model works beautifully at Owner.com. It's not realistic at a 20-person company, and it doesn't need to be. But it's worth knowing the option exists, because as you scale, you'll want to think about whether AI capability lives in every rep's workflow or in a central team that ships tooling to them.

So the rule of thumb for how to read the rubrics below:

- Early stage or no central AI function (most common). Your bar for AI fluency has to be higher at the rep level, since the person you hire will be building their own workflows. If they can't, you'll miss out on the AI leverage your competitors are starting to get.

- Well-resourced and centralized (Series B+). You can hire more AI-Curious candidates for rep-level roles and teach them on the job, because you have the infrastructure to hand them ready-built tooling. Push your bar up at the leadership level, where AI strategy matters most.
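
As a rough illustration, the rule of thumb above can be written as a small decision function. This is only a sketch: the function name `fluency_bar`, the role labels, and the "high" and "moderate" values are hypothetical shorthand for the guidance in this section, not part of the rubrics themselves.

```python
def fluency_bar(has_central_ai_team: bool, role: str) -> str:
    """Return a rough AI-fluency bar for a hire (labels are hypothetical)."""
    if role == "leader":
        # Leaders set AI strategy, so the bar stays high in either setup.
        return "high"
    if has_central_ai_team:
        # A central team ships tooling to reps, so fluency can be taught on the job.
        return "moderate"
    # No central team: reps are building their own workflows.
    return "high"


print(fluency_bar(has_central_ai_team=False, role="sdr"))
print(fluency_bar(has_central_ai_team=True, role="ae"))
```

The point of writing it down this way is that the rep-level bar depends on your setup, while the leadership bar does not.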

How do you hire an AI-native SDR or BDR?

SDR and BDR roles are where AI is hitting sales hardest right now. Prospecting, research, personalization at scale, outbound email: all of it is being rebuilt. But whether your SDRs need to be the ones wielding these tools depends on your setup.

There's a bigger shift happening underneath the tooling question too. The role itself is changing. Lauren Halper has led sales development orgs for nearly a decade, across Bitly, CB Insights, and now Google Cloud.

Her prediction: BDR teams are going to get smaller and more senior. AI absorbs the busy work (generic event blasts, wide-net campaigns), and human BDRs focus on narrow, highly personalized outreach to priority accounts.

That shift changes who you should be hiring.

"I'm more hesitant to hire BDRs without any experience like we did in the past. The role is going to require a little more experience and be less of a role that people jump into right after college."

— Lauren Halper, Head of Business Development (Enterprise), Google Cloud

Download a copy of the rubrics here (no email required)

Interview questions to ask SDR/BDR candidates about their AI fluency:

- Walk me through your outbound process end to end. Where does AI fit in?

- Show me an outbound email you're proud of that was AI-assisted. What was your process?

- If I gave you a new market segment and asked you to build a list of 500 prospects with personalized first touches in 48 hours, how would you approach it?

- What non-mainstream AI tool are you using right now?

- What AI tool have you tried and stopped using, and why?

One quick signal that separates the tiers is whether the candidate pays for their own tools. It's a small filter but a reliable one:

"If you don't have a Pro license, I probably wouldn't talk to you. Because you're not using the capabilities of the thinking and reasoning model underneath the hood."

— Jon Studham, VP of Sales, Spara

That works at a seed-stage company where your reps need to be operators. It does not need to be a hard filter at every company. But it tells you something about whether AI is actually part of how they work or just a line on a resume.

How do you hire an AI-native AE?

For AEs, AI fluency shows up across the entire deal cycle. Discovery prep. Competitive intel. Business case development. Deal strategy. Pipeline management. The best AI-native AEs aren't just faster at outbound. They are more prepared, more strategic, and more efficient at every stage.

But here's where the two-dimensions lens matters most. An AI-native AE who can't walk a deal, doesn't know their pipeline math, or can't hold a discovery call is still a bad hire. Commercial craft is the foundation. AI fluency is the accelerant.

What the AE job actually looks like now

If you're hiring AEs, the job itself has changed. Mark Roberge makes this concrete:

"In the pre-AI world, prepping for a first meeting was quite a bit of work. Reading 10Ks, going through social media feeds. In the post-AI world, it's not that difficult to use AI to accelerate your ability to grasp the context of the buyer, and even to practice with the buyer.

A simple exercise: I tell the rep to go into the LLM and say, 'I have a meeting with Danielle tomorrow. Let's practice. Why would she want to buy my product? What would be some ways she might describe the problem? Who's my competitor and why would I be a better fit?' These are just the scenarios a good seller is preparing for anyway. AI deepens the preparation."

— Mark Roberge, Co-Founder at Stage 2 Capital and former CRO at HubSpot

This is what to assess for. You're not looking for someone who can do this work manually. You're looking for someone who already thinks this way about prep: what can I offload, what do I need to hold in my head, and how do I use AI to sharpen my discovery before I'm sitting across from the buyer?

Which is also why the profile of the ideal AE is shifting. The tenured President's Club rep from a name-brand competitor used to be the default hire, and it's not always the right call anymore. Kyle Norton has made a deliberate pivot at Owner.com:

"I have always erred on the side of potential over previous pedigree. I'd rather have really high potential and tons of energy, someone on the upslope of their career, versus somebody who's got ten years and is really super established."

— Kyle Norton, CRO at Owner.com

His reasoning: tenured reps at high-velocity companies tend to cruise. They've built an established book at their current company, the best deals come to them because they're senior, and the learning motor slows down. That pattern shows up in hiring outcomes, not just in theory.

This doesn't mean don't hire experienced reps. It means don't hire for experience. Hire for the DNA (intellectual horsepower, drive, resilience, coachability) and make sure experience is paired with a learner's mindset. The reps who win in the next three years are the ones who keep adapting as tools evolve, not the ones cruising on the playbook that worked in 2021.

Interview questions to ask AE candidates about their AI fluency:

- Tell me about your most complex deal in the last six months. Where did AI play a role in how you managed it?

- Walk me through your actual prep process for a first discovery call.

- Have you built any tools, workflows, or systems that make you more productive? Show me.

- If you had no inbound for 30 days, walk me through the math and the plan for hitting your number.

- Bring a redacted artifact from a real deal. Walk me through the key moments and how you navigated them.

That last one is one of the most revealing exercises you can run in an AE interview, if you know how to pressure-test it:

"Salespeople are great at lying. Bring in a redacted CRM screen or email or something along those lines where I know it's real. Then we'll walk through it and I'll pressure test. When did you feel like this was going south? When did you secure your champion? How did they help you?"

— Jon Studham, VP of Sales, Spara

The artifact gives you ground truth. The follow-up questions reveal whether they actually managed the deal or just closed it.
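
One of the questions above asks the candidate to walk through the math for hitting their number with no inbound. A minimal sketch of the arithmetic a strong answer works backward through, assuming a simple funnel of meetings to opportunities to closed deals. The function name and every number in the example are made up for illustration; they are not benchmarks.

```python
import math


def required_activity(quota, avg_deal_size, win_rate, meeting_to_opp_rate):
    """Work backward from a quota to the deals, opportunities, and meetings needed."""
    deals = math.ceil(quota / avg_deal_size)          # closed-won deals to hit quota
    opps = math.ceil(deals / win_rate)                # opportunities needed at this win rate
    meetings = math.ceil(opps / meeting_to_opp_rate)  # first meetings needed to create them
    return {"deals": deals, "opportunities": opps, "meetings": meetings}


# Hypothetical inputs: $300k quota, $25k average deal, 25% win rate,
# and half of first meetings converting to an opportunity.
plan = required_activity(300_000, 25_000, 0.25, 0.5)
print(plan)  # {'deals': 12, 'opportunities': 48, 'meetings': 96}
```

A candidate who knows their own versions of these numbers cold, and can say where AI changes the inputs, is showing both commercial craft and AI fluency.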

How do you hire an AI-native sales leader?

At the leadership level, AI fluency shifts to include strategy, not just execution. A VP of Sales or CRO needs the vision to understand how AI reshapes a sales org, the judgment to make build-vs-buy decisions, and the ability to chart the path.

This is the one place where we'd argue the AI-fluency bar has to be the highest.

"I'm hiring a VP, and AI fluency is extremely important. They need to build the strategy and chart the path. My directors don't have to be super AI-pilled because it's all done for them. But the VP has to have an eye for it."

— Kyle Norton, CRO at Owner.com

Interview questions to ask sales leader candidates about their AI fluency:

- How do you think about building an AI-forward sales organization? What's your vision?

- Should AI capability be centralized in a dedicated team or distributed across the org? What's your experience, and what would you do here?

- Tell me about a build-vs-buy decision you made on AI tooling. What happened?

- How do you evaluate whether an AI investment is actually working? What metrics?

- Walk me through how you'd restructure our sales process around AI capabilities in your first 90 days.

That second question is the one we'd pay closest attention to. Centralized vs. decentralized is one of the most important strategic calls a sales leader makes right now, and it is where we saw the sharpest disagreement across the people we interviewed.

Kyle argues centralization wins at scale. Dedicated applied AI people build production-grade tools and hand them to reps. Decentralized adoption, in his view, tends to produce 10 different versions of the same hack, none of which scale.

Jon, running a seed-stage team, expects his reps to be building their own workflows. He can't afford to centralize it, and he doesn't want to. He wants operators who treat AI as part of their job.

Neither is wrong. What matters is that the leader you're hiring has a clear point of view on which model they're building toward, and why it fits your stage. If they can't articulate that, that's a red flag.

One more thing we want to call out is Mark Roberge's prediction about where the CRO profile is heading:

"Our future CRO might look more like our current RevOps person. If you had an A+ RevOps team and a C+ human sales team in a mature environment, I think that high-performing RevOps team will outperform."

— Mark Roberge, Co-Founder at Stage 2 Capital

How to actually run the interview

Before we get into tactics, there’s an uncomfortable truth: you can’t assess AI fluency if you don’t have it yourself.

“You can’t know who’s AI-native if you aren’t. You have to have the depth in order to understand the nuances.”

— Kyle Norton, CRO at Owner.com

This doesn’t mean every interviewer needs to be an AI power user. But at least one person in your interview loop needs to be deep enough to evaluate the quality and sophistication of a candidate’s answers. If nobody on your team can distinguish between a custom GPT and a Claude Project, or explain why meta-prompting matters, you’re going to struggle to assess candidates accurately.

Practical gut check: if you yourself are using AI mostly to polish emails and summarize meetings, you're probably AI-Curious. That's fine, but you need to bring in someone who is further along for the AI portion of the interview. Borrow a technical friend, a portfolio-company peer, or an advisor. Do not try to fake it.

Step 1: The workflow deep-dive

Start with an open-ended question: “Tell me how you’re using AI day to day.” Then keep going deeper. The key technique is simple: keep asking “what else?”

Candidates with surface-level fluency will have one or two answers and then run out of material. Genuinely AI-native candidates will keep going. You’ll eventually have to stop them.

As they talk, listen for:

- Specificity: Do they name exact tools, models, and workflows? Or is it vague?

- Depth: Can they explain why they chose one approach over another?

- Evolution: Have they changed their approach as tools have improved?

- Outcomes: Can they connect their AI usage to measurable results?

Step 2: What case studies look like now

The old take-home case study (go away, come back with a 30-60-90 or a written analysis) is not useful anymore. Any candidate can produce a polished deliverable with AI. The case study itself no longer differentiates.

But "case studies are dead" is the wrong takeaway. The better takeaway is to split your assessment into two parts:

The prep (AI-assisted). If you want them to use AI on the job, you want to see how they use it to prep. Give them a real, time-boxed project. For an SDR: "Research the top 500 companies in this segment, identify decision makers, and give me 10 personalized outbound emails. Two hours." For an AE: "Here's a real deal we're working. Build the mutual action plan and draft the first champion email. One hour." How they approach it tells you where they actually sit.

The live exercise (AI can't help). Design specifically for the skills AI can't cover in the moment. An objection roleplay where they don't know what's coming. A discovery curveball. A final-committee presentation where the CFO asks a hard pricing question.

"There are situations where a rep has to think on their feet. If we're in the middle of a call and the buyer presents information I want to respond to, you're not going to use AI effectively. I want to replicate those scenarios in my role plays."

— Mark Roberge, Co-Founder at Stage 2 Capital

Something to dig deeper on here specifically: does the candidate actually know what's in the AI output they brought you? Reps who did the research themselves have it embedded. Reps who delegated it entirely to AI often haven't internalized it, even if the brief looks great.

Ask them to open the meeting, not just show you the brief. If they can't, they used AI as a shortcut instead of a tool. That's the difference between AI-Active and AI-Native, and this is exactly where it shows up.

Step 3: Values and mindset fit

Beyond skills and tools, you’re looking for a mindset. The best AI-native sellers share a few characteristics:

- Relentless curiosity: They’re constantly experimenting with new tools and approaches. They have opinions about niche tools that most people haven’t heard of.

- Builder mentality: They don’t just use tools. They create workflows, systems, and processes around them.

- Outcome orientation: They measure the impact of what they build and kill things that don’t work.

- Healthy skepticism: They know the difference between a cool demo and something production-grade. They’ve built things that didn’t work and can talk about why.

Making the hire: positioning and closing

If you've done the work above, you've identified a candidate you really want to hire. Now you have to close them.

Over the last year, we’ve seen that AI-native candidates are harder to close than traditional sales hires. They have more options, and they have higher standards for the environments they'll work in. Here's what we see on our side of the table.

What they actually care about

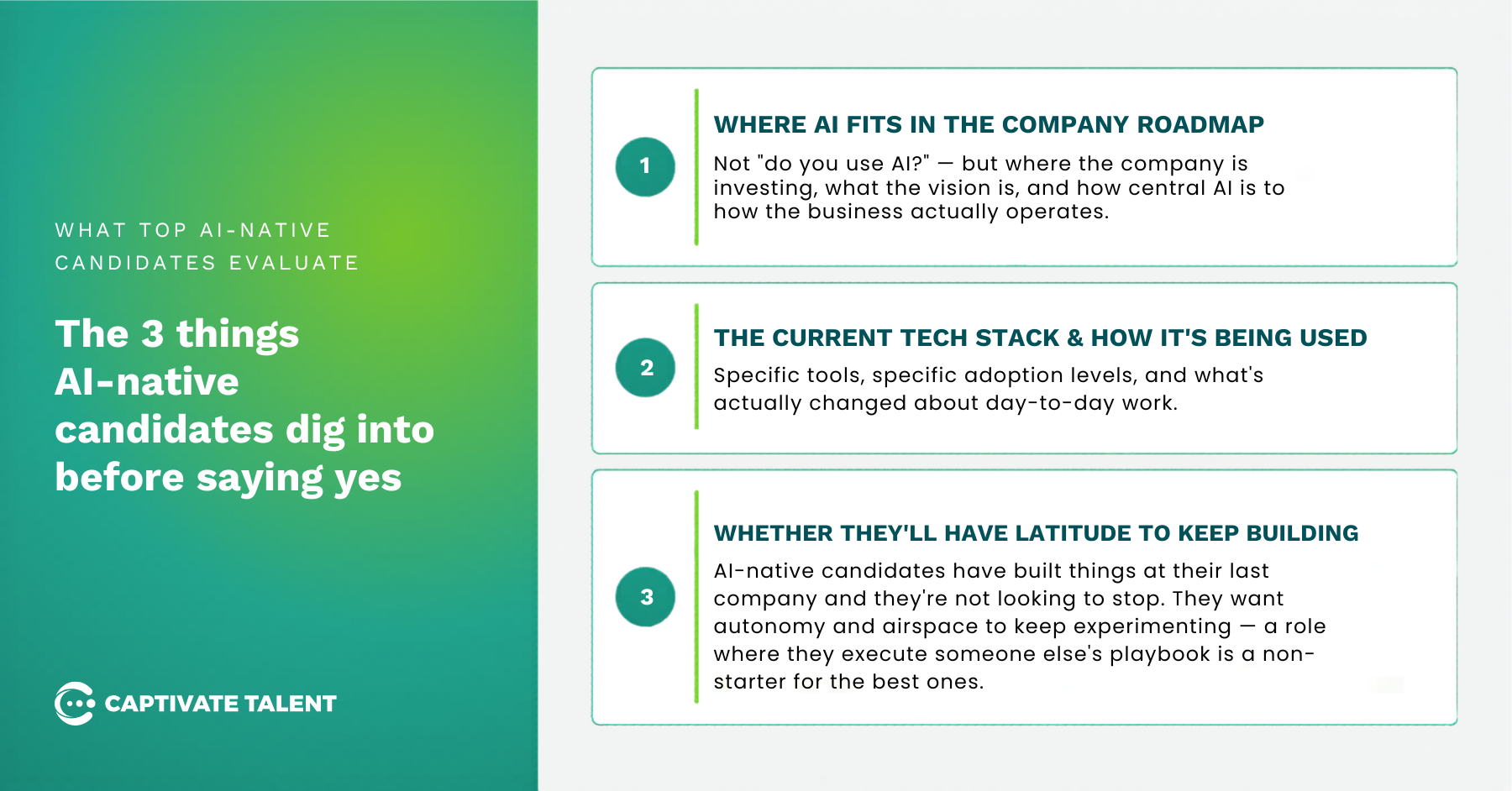

From our placement data, the best AI-native candidates are asking about three things when they evaluate a role:

- Where AI fits in the company roadmap. Not "do you use AI?" but where is the company investing, what's the vision, and how central is AI to how the business actually operates?

- The current tech stack and how it's being used. Specific tools, specific adoption levels, and what's actually changed about day-to-day work.

- Latitude to keep building. This is the one founders miss most often. AI-native candidates have built things at their last company and they're not looking to stop. They want autonomy and airspace to keep experimenting. A role where they execute someone else's playbook and nothing else is a non-starter for the best ones.

"AI-native candidates are looking at how AI fits into a company roadmap, what tech stack you're using, how it's been used to optimize workflows, and whether they'll have latitude to continue building and evolving their own work."

— Kendra Morales, Head of GTM & Operations, Captivate Talent

Don't oversell your own maturity

The biggest mistake we see, especially at the leadership level, is hiring managers overselling where their company actually is on AI.

The best AI-native candidates are skeptical of anything that sounds too polished. They've been in enough companies to know that "AI-forward" in a job description often means "we have enterprise ChatGPT." If your product is AI-native but your own sales team's adoption is rudimentary, say so. They'll respect you more for naming it than for papering over it.

"The most judgmental hiring managers always have the most skeletons. Tell candidates exactly where you want to be, where you think there are breaks in the system, and work backward from that point."

— Casey Erickson, Head of Executive Search, Captivate Talent

Three things to be specific about when you pitch:

- The gap you're hiring them to close. Why does this role matter right now? What's broken, and what will they have autonomy to change?

- What's fair game to rebuild, and what isn't. Candidates want to know which systems they can blow up and where your sacred cows are. Given how much tool fatigue is out there, give them airspace to make their own calls on what stays and what goes.

- Why your metric matters. If you need a retention bump or a revenue spike, explain the why. What's broken today, and why haven't you been able to fix it? That context is what lets a great candidate actually decide if they want the job.

Compensation is shifting

Two things are happening in comp right now, and both are worth paying attention to.

On base. There's a real premium starting to form for AI-native talent. We’re seeing some companies pay roughly 20% above what they'd pay for the same function without it. The premium is most visible in roles where AI fluency directly compounds, where one fluent operator can do the work of three through better tooling. It’s most pronounced in CSM and RevOps roles today, but it's quickly spreading into sales.

On variable. The best candidates want to be paid for outcomes, not just base. AI-native sellers tend to bet on their own abilities. They want incentives tied to efficiency gains, margin improvement, and the specific behaviors you're trying to multiply. Rather than just stacking base comp, think about variable structures designed around what a great AI-native operator can actually move, versus what a traditional seller could.

"They're going to want to be incented at every efficiency gain, and EBITA improvement. They are going to be experts in measuring progress, and will expect to be paid for that."

— Casey Erickson, Head of Executive Search, Captivate Talent

Taken together, AI-native comp packages often look slightly different from traditional sales comp: a modest base premium plus a more creative variable structure tied to the specific leverage you're hiring them for.

Before you start hiring

The hardest part of this isn't the rubrics. It's the honesty. Most companies don't actually know what they need from an AI-native hire. They know they want one. There's a difference.

If you're hiring right now, start by getting specific. What does AI-native mean for this role at your stage? What can you teach versus what do they need to walk in with? Where's your own bar, and is anyone on your team fluent enough to actually evaluate it?

Once you've answered those, the rubrics in this piece are the easy part.